What is Quality of Service (QoS)?

Quality of Service (QoS) - also known as Traffic Control - allows network administrators to manage the flow of traffic and distribute network resources effectively. This technology prioritizes certain types of data, applications, or users over others, so that the most important data gets through even during times of high network congestion. QoS can greatly reduce problems with real-time communication, such as choppy video and dropped calls.

How does QoS work?

Imagine the data in your business network as cars in a bustling city, each heading to different destinations. During rush hours, traffic snarls up, causing delays. This is where Quality of Service (QoS) steps in, functioning like a skilled traffic officer to control the flow of data on your network.

What does QoS mean for your business? It ensures the most critical business data gets priority when the network is overwhelmed. This could mean prioritizing a video call with a potential investor over employees’ file downloads or other non-time-critical Internet activities.

Switches and routers that support QoS do two fundamental things: they classify data packets, and they queue them according to priority. They can also proactively avoid congestion. Let's unpack each of these aspects.

Classification

Classification involves marking packets according to their importance. For instance, video conferencing data might be marked as high priority, while casual browsing data could be marked as low priority. This way, the switch or router knows what data needs to go first.

Classification typically involves examining data packets and assigning them to different traffic classes based on certain criteria, which might include:

- Source or Destination IP Address: The IP address can help identify if the packet belongs to a critical service or a high-priority user.

- Application Type: Certain applications, such as VoIP or video conferencing, can be given priority due to their real-time and sensitive nature.

- Protocol Type: The transport protocol a packet uses, such as TCP or UDP, can be used for classification.

- Ports: Specific port numbers associated with particular applications can be used for prioritizing those services.

- VLANs: Data from particular VLANs may have higher priority.

Once packets are classified, they're marked with a specific identifier. This could be a Differentiated Services Code Point (DSCP) value, carried in the IP header, or a Class of Service (CoS) value, carried in the 802.1Q VLAN tag of the Ethernet frame. This identifier is then used to determine the priority of the packet as it travels through the network.
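To make classification and marking more concrete, here is a minimal Python sketch. It is an illustration only, not how any particular switch or router implements QoS. The `Packet` fields, the voice VLAN number, the video server address, and the UDP port range are all hypothetical values chosen for the example. The DSCP numbers themselves (46 for Expedited Forwarding, 34 for AF41, 0 for best effort) are the standard code points.

```python
from dataclasses import dataclass

# Standard DSCP code points (decimal values).
DSCP_EF = 46           # Expedited Forwarding, commonly used for voice
DSCP_AF41 = 34         # Assured Forwarding 41, commonly used for video
DSCP_BEST_EFFORT = 0   # default, no special treatment

# Hypothetical site policy, invented for this example.
VOICE_VLAN = 20
VIDEO_SERVER_IP = "10.0.50.10"
VIDEO_UDP_PORTS = range(50000, 50020)


@dataclass
class Packet:
    src_ip: str
    dst_ip: str
    protocol: str      # "tcp" or "udp"
    dst_port: int
    vlan: int
    dscp: int = DSCP_BEST_EFFORT


def classify(packet: Packet) -> int:
    """Return the DSCP value this packet should carry under the example policy."""
    # Rule 1: anything on the voice VLAN is treated as real-time voice.
    if packet.vlan == VOICE_VLAN:
        return DSCP_EF
    # Rule 2: UDP traffic to the video server, or to a conferencing port, is video.
    if packet.protocol == "udp" and (
        packet.dst_ip == VIDEO_SERVER_IP or packet.dst_port in VIDEO_UDP_PORTS
    ):
        return DSCP_AF41
    # Everything else is best effort.
    return DSCP_BEST_EFFORT


def mark(packet: Packet) -> Packet:
    """Write the chosen DSCP value into the packet so later hops can use it."""
    packet.dscp = classify(packet)
    return packet


call = mark(Packet("10.0.20.7", "10.0.20.9", "udp", 16384, vlan=20))
web = mark(Packet("10.0.1.5", "203.0.113.8", "tcp", 443, vlan=10))
print(call.dscp, web.dscp)
```

The rules are checked in order, so the first match wins. Real devices typically express the same idea as an ordered list of match conditions and actions.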

Queuing

Queuing refers to the process of sorting these classified packets into different queues based on their priority. During times of congestion, high-priority queues are emptied before low-priority ones, making sure critical business operations continue smoothly. QoS lets you give different weightings to different queues, to balance traffic effectively.

There are several common queuing techniques used in QoS:

- Priority Queuing (PQ): In this method, also called strict priority queuing, packets are organized into different priority queues, and packets in the highest priority queue are always sent first. While this method guarantees high-priority traffic is served first, it can lead to lower-priority traffic being ignored or "starved" during peak traffic times, as it only services the other queues when the higher priority ones are empty.

- Weighted Fair Queuing (WFQ): Similar in aim to weighted round robin (WRR) queuing, this provides a more balanced approach, where each queue gets a share of the bandwidth in proportion to its weight. This ensures that all queues get some bandwidth, preventing starvation of low-priority traffic.

- Low Latency Queuing (LLQ): LLQ, or mixed scheduling, combines the best aspects of strict priority and round-robin queuing. It provides at least one priority queue for delay-sensitive traffic like VoIP but uses round robin queuing on other queues, to ensure that other traffic is not starved. This method is often used in networks with a mix of real-time and non-real-time traffic.
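
The difference between these techniques is easiest to see in the order packets leave the device. The following Python sketch is a simplified model, not a vendor implementation: it counts packets rather than bytes, the weighted scheduler is a plain weighted round robin, and the function names, queue contents, and weights are made up for the example.

```python
from collections import deque


def strict_priority(queues):
    """Always send from the highest-priority non-empty queue (queues[0] is highest)."""
    while any(queues):
        for q in queues:
            if q:
                yield q.popleft()
                break  # start again from the top after every packet


def weighted_round_robin(queues, weights):
    """Visit each queue in turn, sending up to `weight` packets per visit."""
    while any(queues):
        for q, w in zip(queues, weights):
            for _ in range(w):
                if not q:
                    break
                yield q.popleft()


def low_latency(priority_queue, other_queues, weights):
    """One strict-priority queue, plus weighted round robin across the rest."""
    while priority_queue or any(other_queues):
        # Delay-sensitive traffic is always sent first.
        while priority_queue:
            yield priority_queue.popleft()
        for q, w in zip(other_queues, weights):
            for _ in range(w):
                # Stop serving this queue if priority traffic has arrived.
                if priority_queue or not q:
                    break
                yield q.popleft()
            if priority_queue:
                break


def sample_queues():
    """Fresh example queues: voice (highest), data, bulk (lowest)."""
    return deque(["v1", "v2"]), deque(["d1", "d2", "d3"]), deque(["b1", "b2", "b3"])


voice, data, bulk = sample_queues()
print(list(strict_priority([voice, data, bulk])))

_, data, bulk = sample_queues()
print(list(weighted_round_robin([data, bulk], weights=[2, 1])))

voice, data, bulk = sample_queues()
print(list(low_latency(voice, [data, bulk], weights=[2, 1])))
```

With strict priority, the bulk packets wait until every voice and data packet has gone. With weighted round robin, bulk packets are interleaved with data packets at a 1:2 ratio. The low latency scheduler sends voice first and then shares the remaining capacity between data and bulk.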

Preventing congestion

The main technique QoS uses for congestion avoidance is Random Early Detection (RED). RED is an algorithm that proactively drops packets before a queue is completely full. When network traffic starts to get heavy, RED begins to drop packets at random. TCP’s congestion control mechanism treats a lost packet as a sign of congestion, so the affected senders reduce their transmission rate before the network becomes overloaded. RED is highly configurable, so you have control over what kind of packets get dropped and how aggressively.
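
As a rough illustration of how RED decides when to drop, the sketch below follows the classic formulation of the algorithm in simplified form. The parameter names (`min_threshold`, `max_threshold`, `max_probability`, `weight`) and the example values are assumptions made for this sketch, not settings from any specific product. Real implementations also adjust the probability based on how many packets have been accepted since the last drop, which is omitted here.

```python
import random


def update_average(avg_len, current_len, weight=0.002):
    """Smooth the queue length with a moving average so short bursts are tolerated."""
    return (1 - weight) * avg_len + weight * current_len


def red_drop_probability(avg_len, min_threshold, max_threshold, max_probability):
    """Drop probability as a function of the average queue length."""
    if avg_len < min_threshold:
        return 0.0                      # light load: never drop
    if avg_len >= max_threshold:
        return 1.0                      # queue nearly full: drop everything
    # In between, the probability rises linearly from 0 up to max_probability.
    fraction = (avg_len - min_threshold) / (max_threshold - min_threshold)
    return max_probability * fraction


def should_drop(avg_len, min_threshold, max_threshold, max_probability, rng=random):
    """Randomly decide whether to drop an arriving packet."""
    p = red_drop_probability(avg_len, min_threshold, max_threshold, max_probability)
    return rng.random() < p


# Example with thresholds of 20 and 60 packets and a 10% maximum drop probability.
for avg in (10, 30, 50, 70):
    print(avg, red_drop_probability(avg, 20, 60, 0.1))
```

Because drops start gently and become more likely as the average queue grows, different senders tend to slow down at different moments rather than all at once.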

In conclusion

The implementation of QoS can bring significant benefits to your business. It ensures that vital applications have the bandwidth they need, reduces packet loss, and gives you more control over network resource allocation. Essentially, it's like having a sophisticated digital traffic management system that ensures smooth operation.

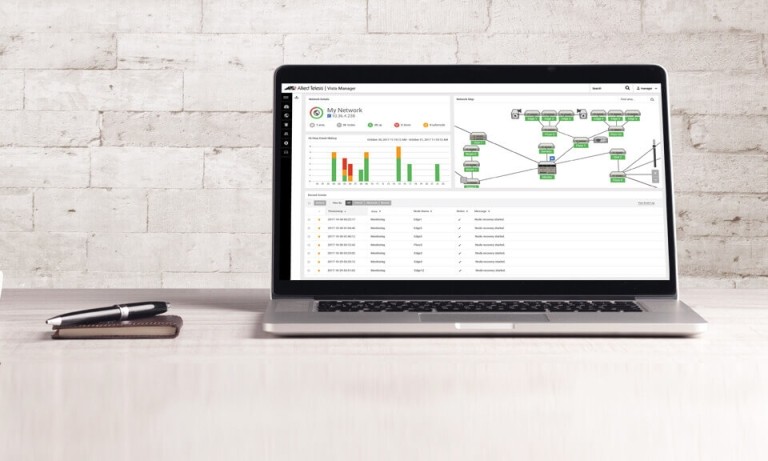

However, to optimize QoS, you need to have a comprehensive understanding of your business's network needs. This involves identifying which applications or data are of utmost importance and adjusting the settings to keep up with changes in your network usage. This can be complex, but Vista Manager from Allied Telesis helps make it simple, by giving you an at-a-glance view of congestion and an intuitive tool for changing queue settings.